Code

|

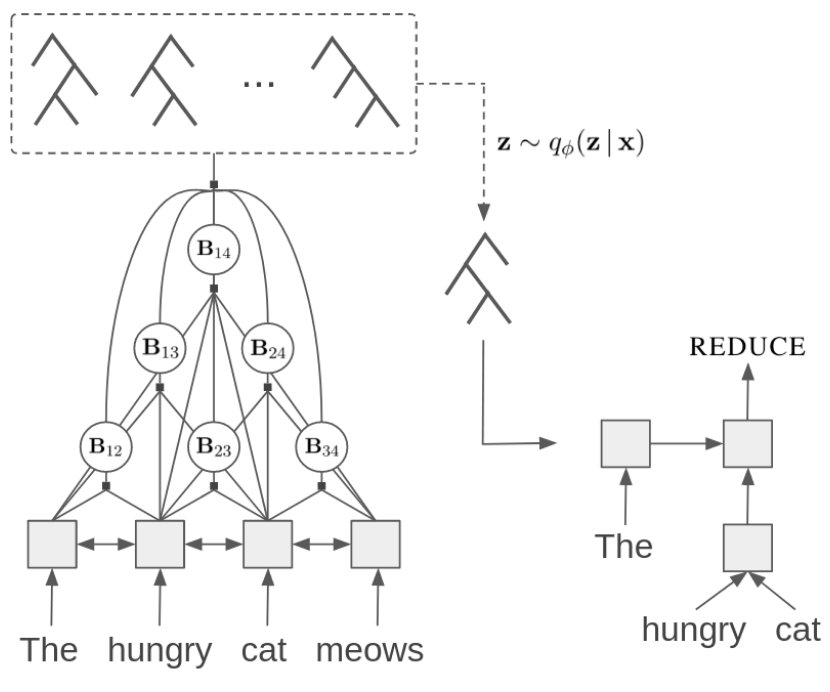

Unsupervised Recurrent Neural Network Grammars

Yoon Kim, Alexander M. Rush, Lei Yu, Adhiguna Kuncoro, Chris Dyer, Gábor Melis. github |

|

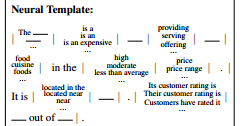

Learning Neural Templates for Text Generation

Sam Wiseman, Stuart M. Shieber, Alexander M. Rush. github |

|

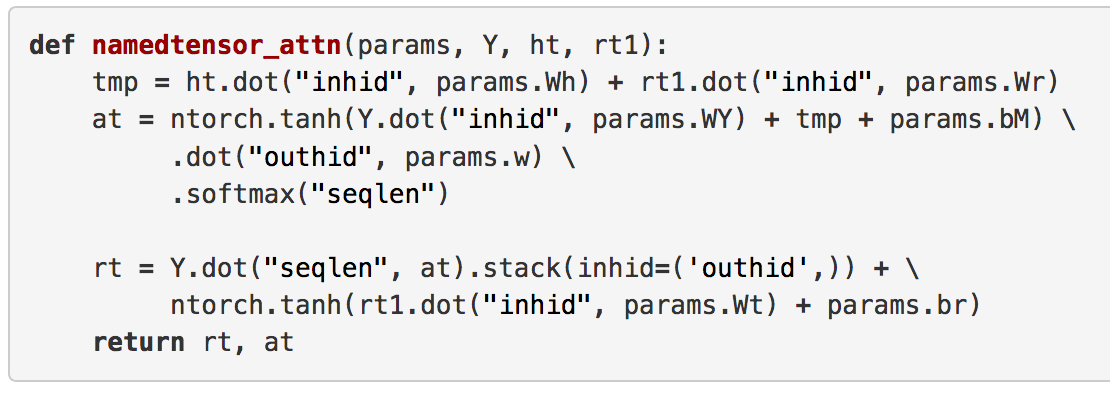

Tensor Considered Harmful

Alexander M. Rush. github |

|

Giant Language model Test Room

Hendrik Strobelt, Sebastian Gehrmann, Alexander M. Rush. github |

|

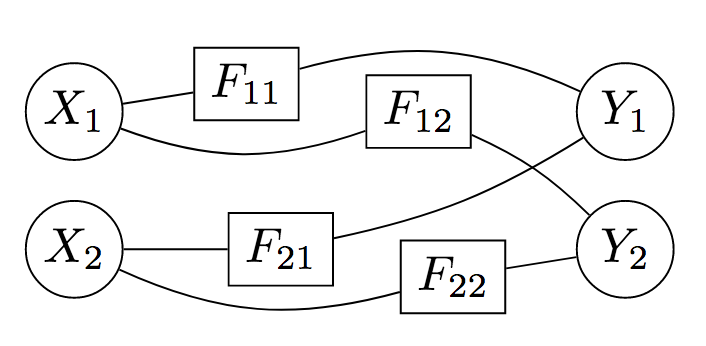

Tensor Variable Elimination for Plated Factor Graphs

Fritz Obermeyer, Eli Bingham, Martin Jankowiak, Justin Chiu, Neeraj Pradhan, Alexander M. Rush, Noah Goodman. github |

|

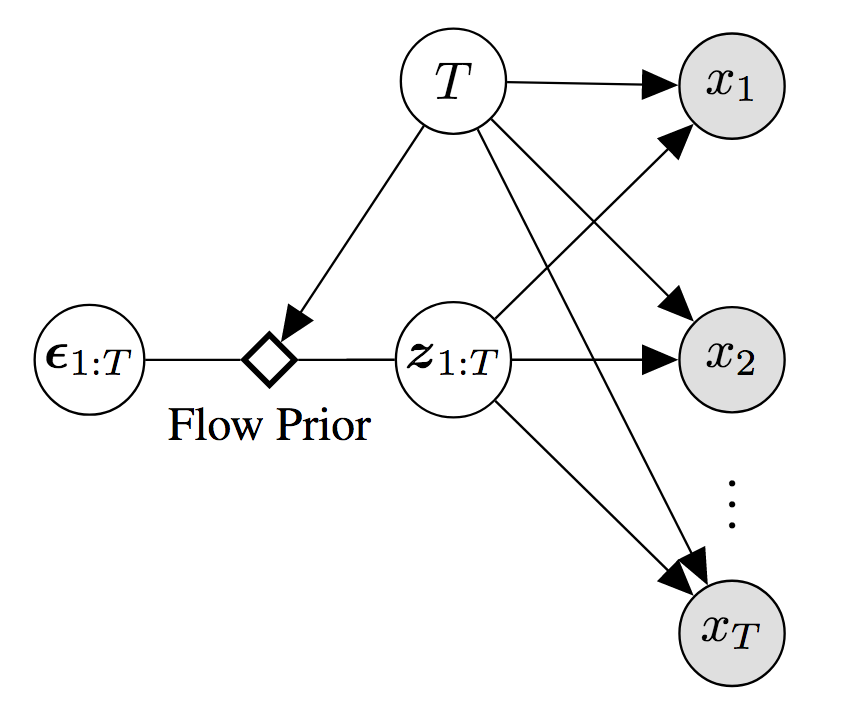

Latent Normalizing Flows for Discrete Sequences

Zachary M. Ziegler, Alexander M. Rush. github |

|

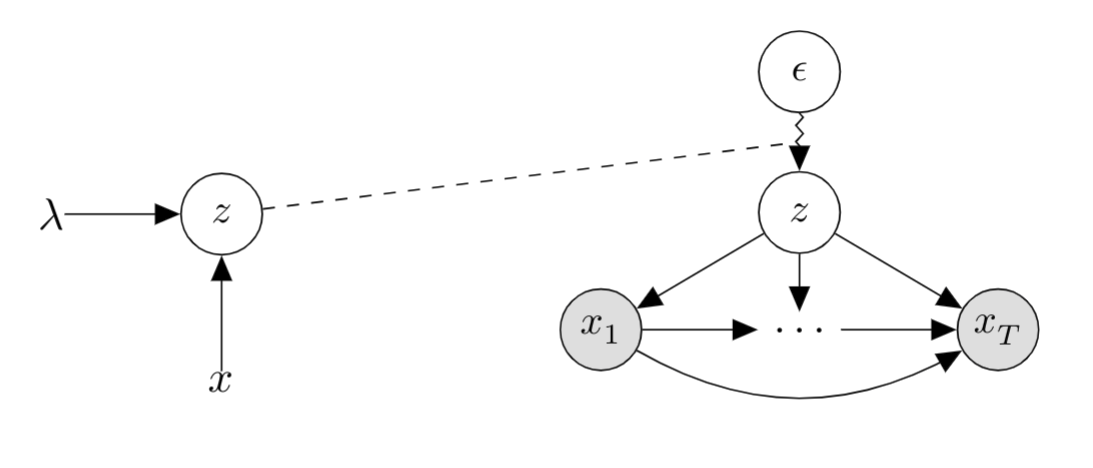

Deep Latent-Variable Models for Natural Language

Yoon Kim, Sam Wiseman, Alexander M. Rush. github |

|

End-to-End Content and Plan Selection for Data-to-Text Generation

Sebastian Gehrmann, Falcon Z. Dai, Henry Elder, Alexander M. Rush. github |

|

Variational Attention

Yuntian Deng*, Yoon Kim*, Justin Chiu, Demi Guo, Alexander M. Rush. github |

|

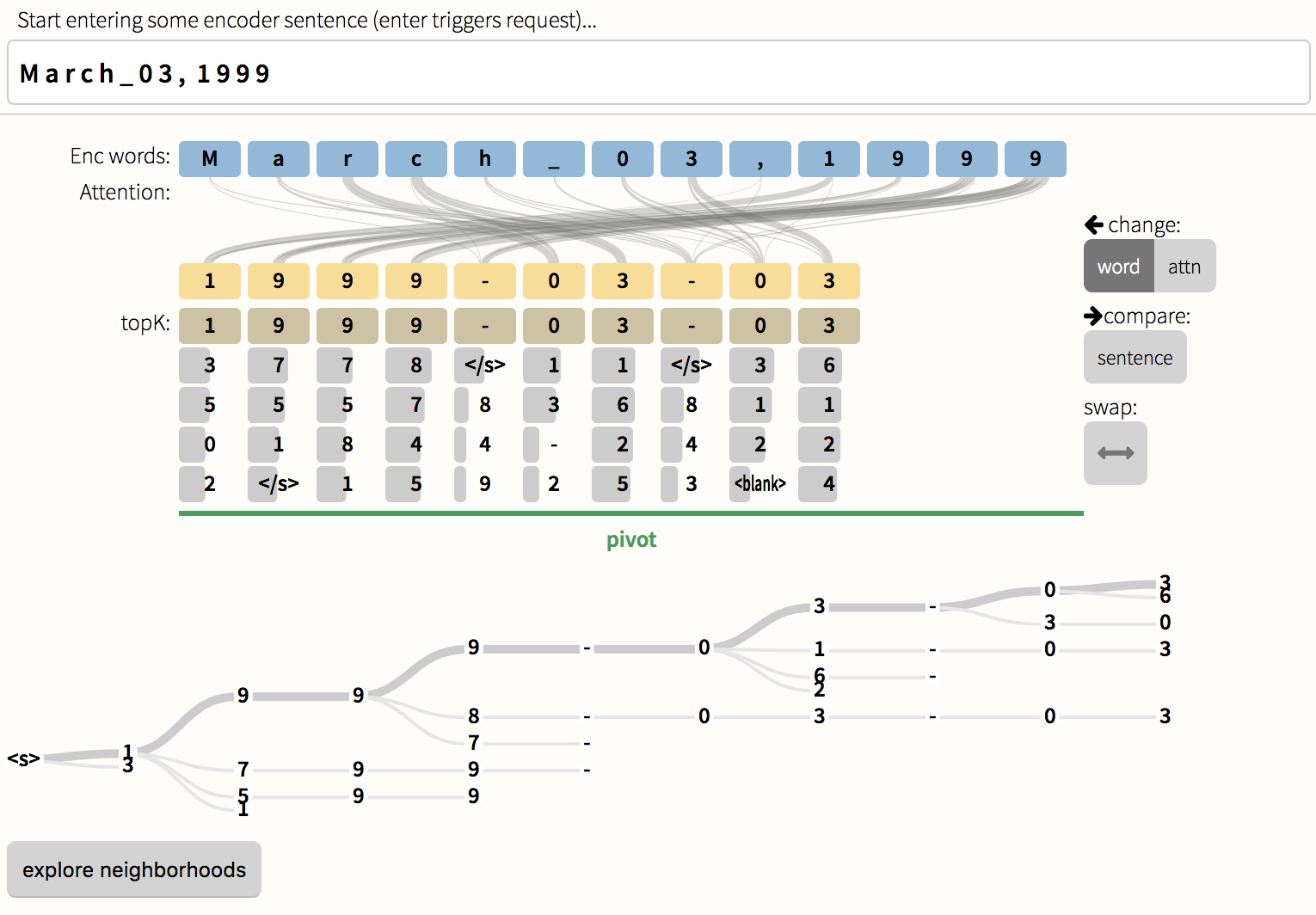

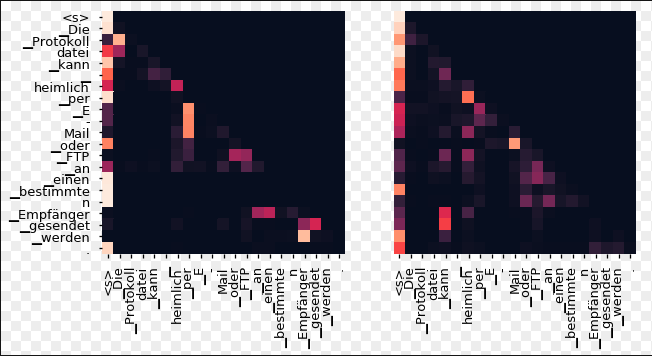

Seq2Seq Vis

Hendrik Strobelt and Sebastian Gehrmann. github |

|

OpenNMT

HarvardNLP + Systran. github data |

|

The Annotated Transformer

Alexander Rush. github |

|

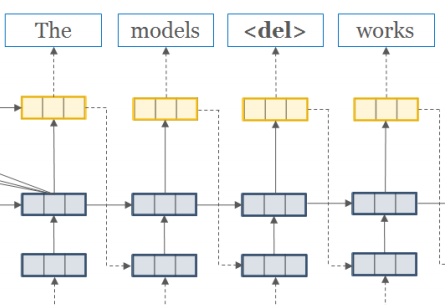

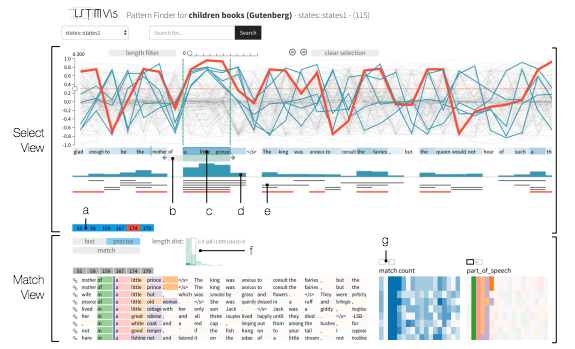

LSTMVis

Hendrik Strobelt and Sebastian Gehrmann. github models |

|

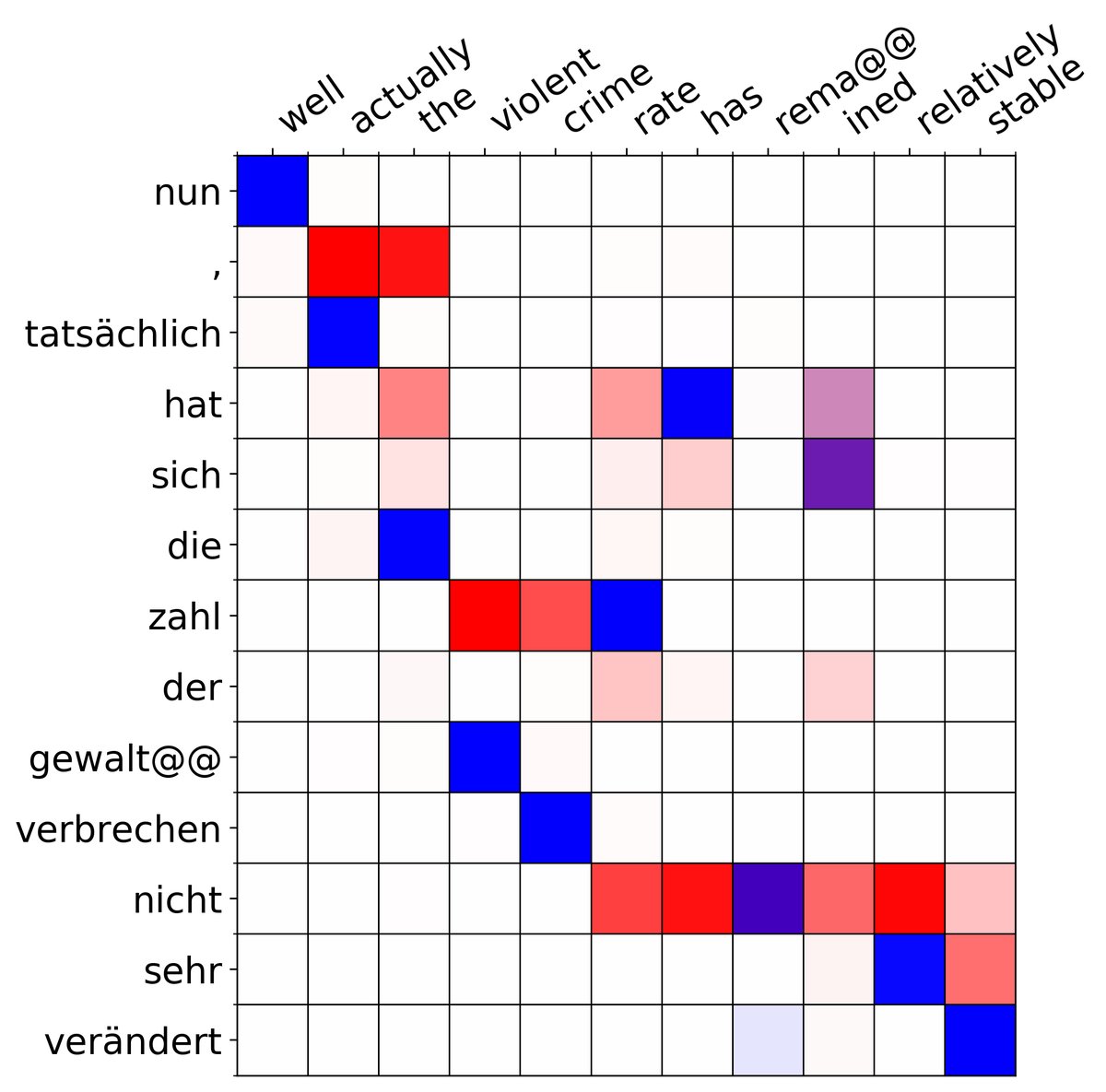

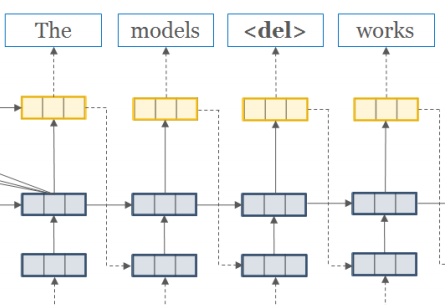

Sequence-to-Sequence with Attention

Yoon Kim. github data models |

|

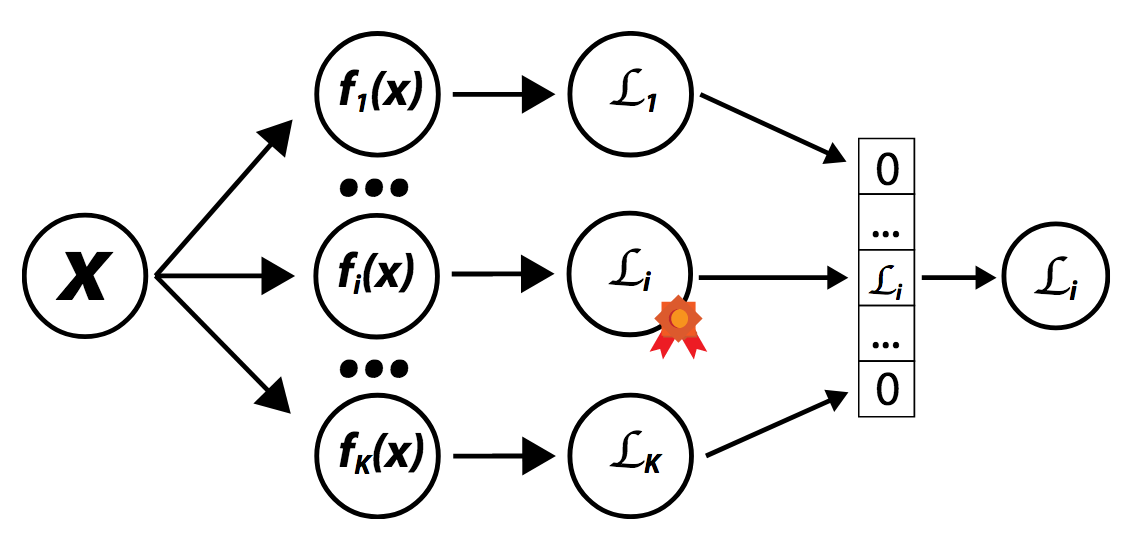

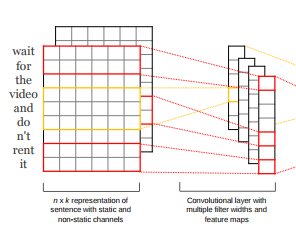

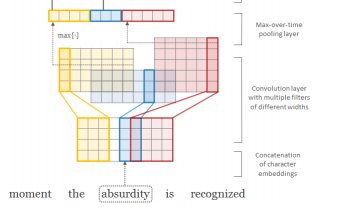

CNN for Text Classification

Jeffrey Ling (based on code by Yoon Kim). github |

|

Im2Markup

Yuntian Deng. github data |

|

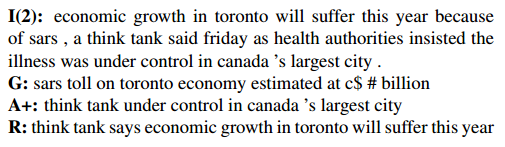

ABS: Abstractive Summarization

Alexander Rush. github data |

|

LSTM Character-Aware Language Model

Yoon Kim. github data |

|

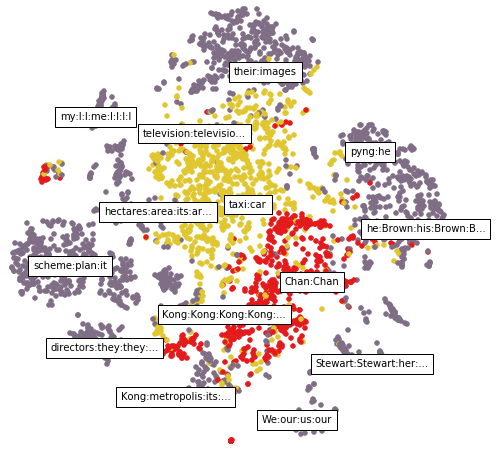

Neural Coreference Resolution

Sam Wiseman. github |

|

PAD: Phrase-structure After Dependencies

Alexander Rush and Lingpeng Kong. github |

|

Android Translation

Alexander Rush and Yoon Kim. github models |

Tutorials

If you are new to Torch, we have a set of tutorials prepared as part of CS287, a graduate class on ML in NLP. These notebooks, prepared by Sam Wiseman and Saketh Rama, assume basic familiarity with the core aspects of Torch and move quickly to advanced topics such as memory usage, the details of the nn module, and recurrent neural networks.

| General Torch Notes |

| Torch nn module Notes |

| Torch rnn Notes |

| Torch and Dynamic Programming |